3 Ways Artificial Intelligence Is Learning to Show Emotions


Artificial Intelligence (AI) is no longer limited to data processing and logical decision-making.

Modern AI systems are increasingly designed to understand and respond to human emotions, making interactions more natural and intuitive. While AI does not possess genuine feelings, it can simulate emotional awareness by analyzing signals such as voice, text, and facial expressions and by adapting how it communicates.

1. Emotion recognition through voice, text, and facial expressions

One of the most significant advancements in AI is its ability to detect emotional cues. By analyzing tone of voice, speech patterns, word choice, and facial expressions, AI systems can infer a user’s emotional state.

This capability is widely used in customer support, mental health platforms, education technologies, and automotive systems that monitor driver stress or fatigue.
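To make the text side of this concrete, here is a minimal sketch of inferring an emotional state from word choice alone. It is an illustration only: the cue-word lists, the labels, and the `infer_emotion` function are hypothetical, and production systems generally rely on trained models over voice, text, and images rather than a hand-written word list.

```python
import re
from collections import Counter

# Hypothetical, hand-picked cue words (an assumption for illustration).
CUE_WORDS = {
    "frustrated": {"angry", "annoyed", "terrible", "useless", "frustrated"},
    "anxious": {"worried", "nervous", "afraid", "stressed", "anxious"},
    "happy": {"great", "thanks", "love", "happy", "wonderful"},
}


def infer_emotion(text: str) -> str:
    """Return the label whose cue words occur most often, or 'neutral'."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter()
    for label, cues in CUE_WORDS.items():
        # Count how many words in the message belong to this label's cue list.
        counts[label] = sum(1 for w in words if w in cues)
    label, hits = counts.most_common(1)[0]
    # With no cue words at all, fall back to a neutral reading.
    return label if hits > 0 else "neutral"


print(infer_emotion("I am so annoyed, this app is useless"))
print(infer_emotion("The meeting is at noon"))
```

A word-count approach like this misses sarcasm, negation, and context, which is one reason real systems combine several signals instead of relying on vocabulary alone.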

2. Emotion-aware language and contextual responses

Modern language models are trained not only on what to say but also on how to say it. AI can adjust its tone to be empathetic, neutral, or encouraging depending on the situation.

This context-sensitive communication helps users feel understood and supported, enhancing trust and engagement during interactions.

3. Personalization based on emotional feedback

AI systems learn from user behavior and emotional responses over time, allowing them to tailor their communication style. This includes adjusting formality, level of detail, and emotional tone based on individual preferences.

Such personalization is particularly valuable in education, digital assistants, and healthcare solutions, where effective communication plays a critical role.
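One simple way to picture learning "over time" is a running average of user feedback that gradually shifts the preferred tone. The sketch below assumes a hypothetical `ToneProfile` class, a feedback score between 0 and 1, and arbitrary thresholds; none of these come from a specific product.

```python
from dataclasses import dataclass


@dataclass
class ToneProfile:
    """Running estimate of how well a user responds to a warmer tone."""

    warmth: float = 0.5  # 0.0 = strictly neutral, 1.0 = very empathetic
    rate: float = 0.2    # hypothetical smoothing factor

    def update(self, feedback: float) -> None:
        """Blend in feedback in [0, 1]; 1 means a warm reply was well received."""
        feedback = min(1.0, max(0.0, feedback))
        # Exponential moving average: recent feedback counts more than old feedback.
        self.warmth = (1 - self.rate) * self.warmth + self.rate * feedback

    def tone(self) -> str:
        """Map the running estimate to one of three tones (thresholds are assumptions)."""
        if self.warmth >= 0.66:
            return "empathetic"
        if self.warmth <= 0.33:
            return "neutral"
        return "encouraging"


profile = ToneProfile()
for score in (1.0, 1.0, 1.0, 1.0, 1.0):
    profile.update(score)
print(profile.tone())
```

Because each update only nudges the estimate, a single unusual interaction does not flip the communication style, while a consistent pattern of feedback eventually does.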

The growing emotional awareness of AI reflects a broader shift toward human-centered technology, where efficiency is combined with understanding to create more meaningful digital experiences.

Follow us in social networks

-

Control the Direction, Not the Situation: The Role of Adaptability in Business

2026/03/18/ 18:13 -

IDBank issued the 2nd and 3rd tranches of bonds of 2026

2026/03/17/ 16:42 -

The Fourth Doing Digital Forum to be Held Under the Theme

2026/03/17/ 14:34 -

When work breaks your heart: how to overcome professional crises

2026/03/17/ 11:57 -

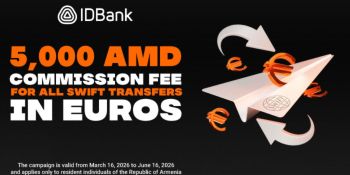

IDBank Launches Special Campaign for SWIFT Transfers

2026/03/16/ 18:42 -

Pay with Evoca Mastercard and get 10% cashback

2026/03/16/ 17:00 -

Change in the Executive Management of Converse Bank

2026/03/16/ 14:40 -

Converse Bank shares its capital market expertise at the IV Conference Capital Markets Armenia

2026/03/16/ 14:31 -

Investment Offer for Women

2026/03/13/ 18:31 -

Safe Workplace as a Guarantee of Development

2026/03/12/ 17:43

Subscribe

Subscribe